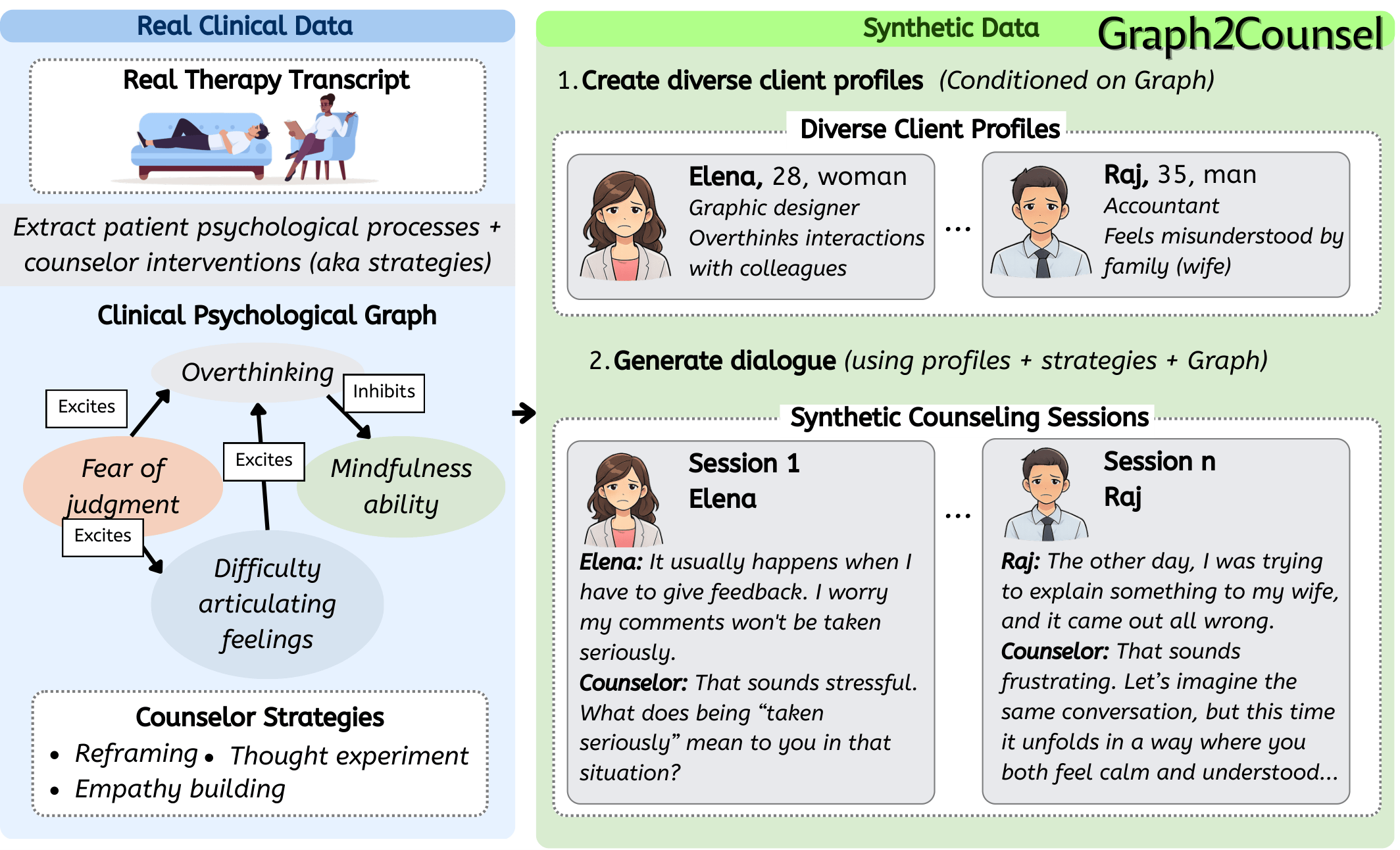

A framework for generating clinically grounded synthetic counseling dialogues from structured client psychological graphs, enabling privacy-preserving training data for mental health AI.

I work at the intersection of privacy-preserving NLP, clinical mental health AI, and dialogue systems. My PhD focuses on generating high-fidelity synthetic therapy sessions that preserve patient privacy. I am also broadly interested in multimodality and representation learning.


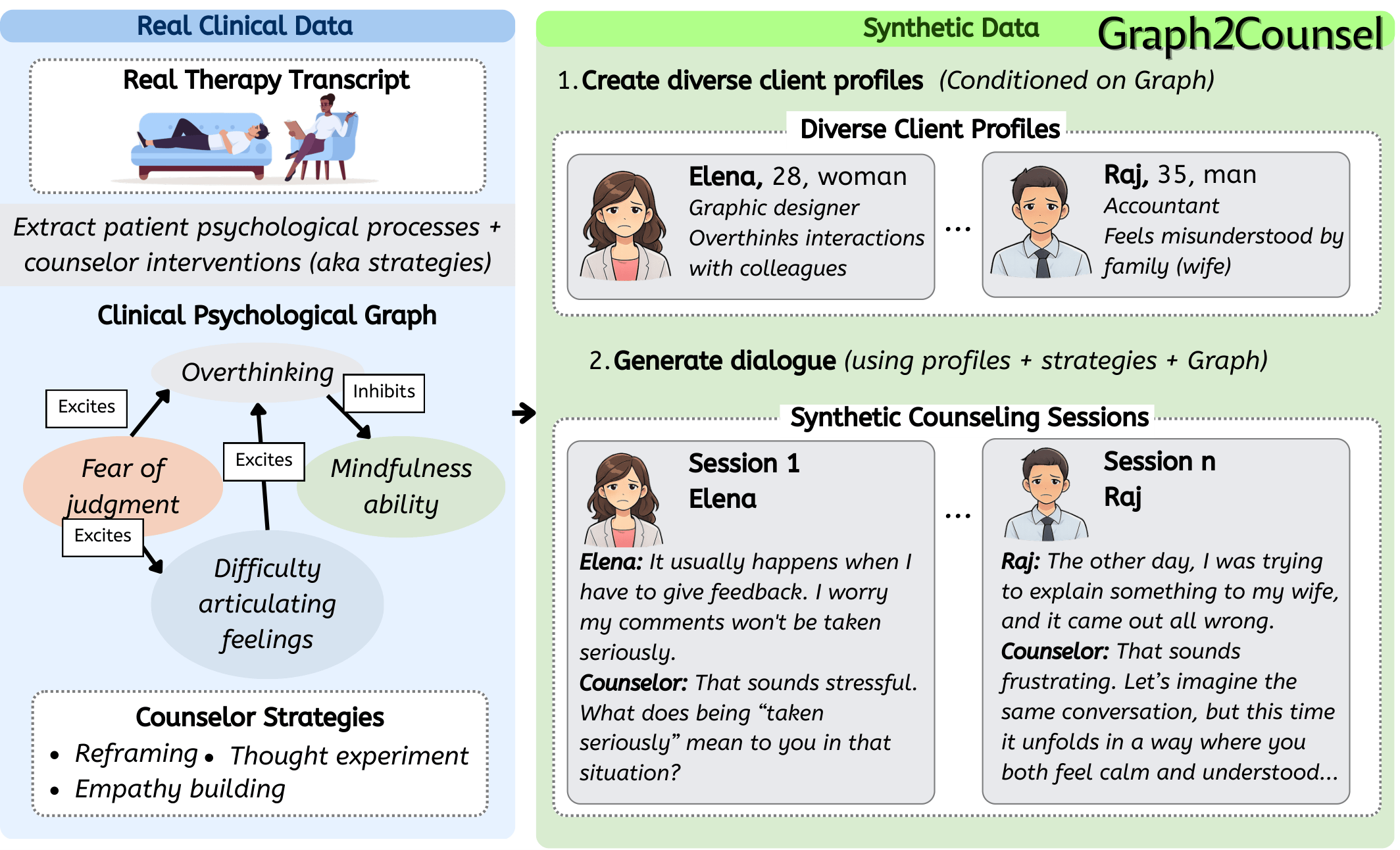

Secure audio processing pipeline for suicide risk assessment from therapeutic exchanges, combining privacy-preserving techniques with clinical NLP.

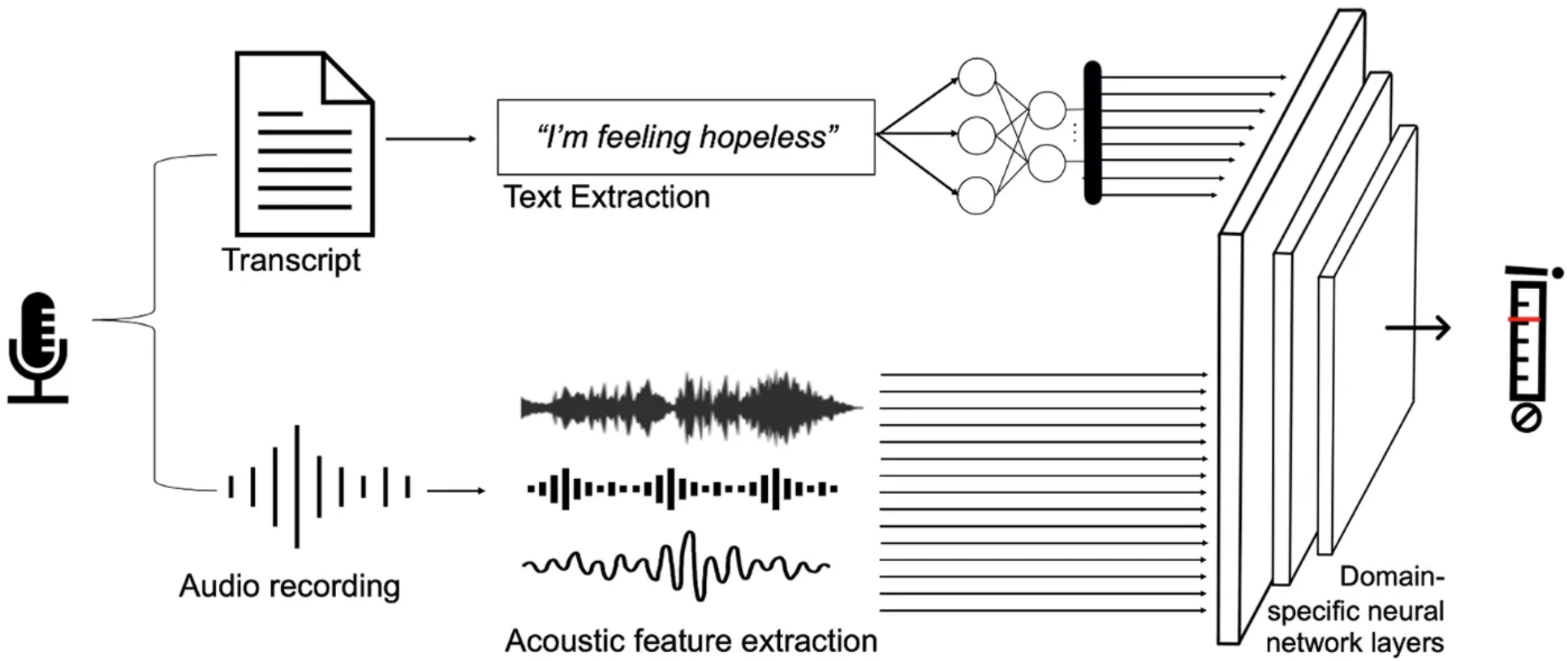

A multi-agent LLM framework for generating synthetic therapy sessions, achieving a 3.8% improvement on standard clinical scales and a 77.2% expert preference rate over state-of-the-art datasets.

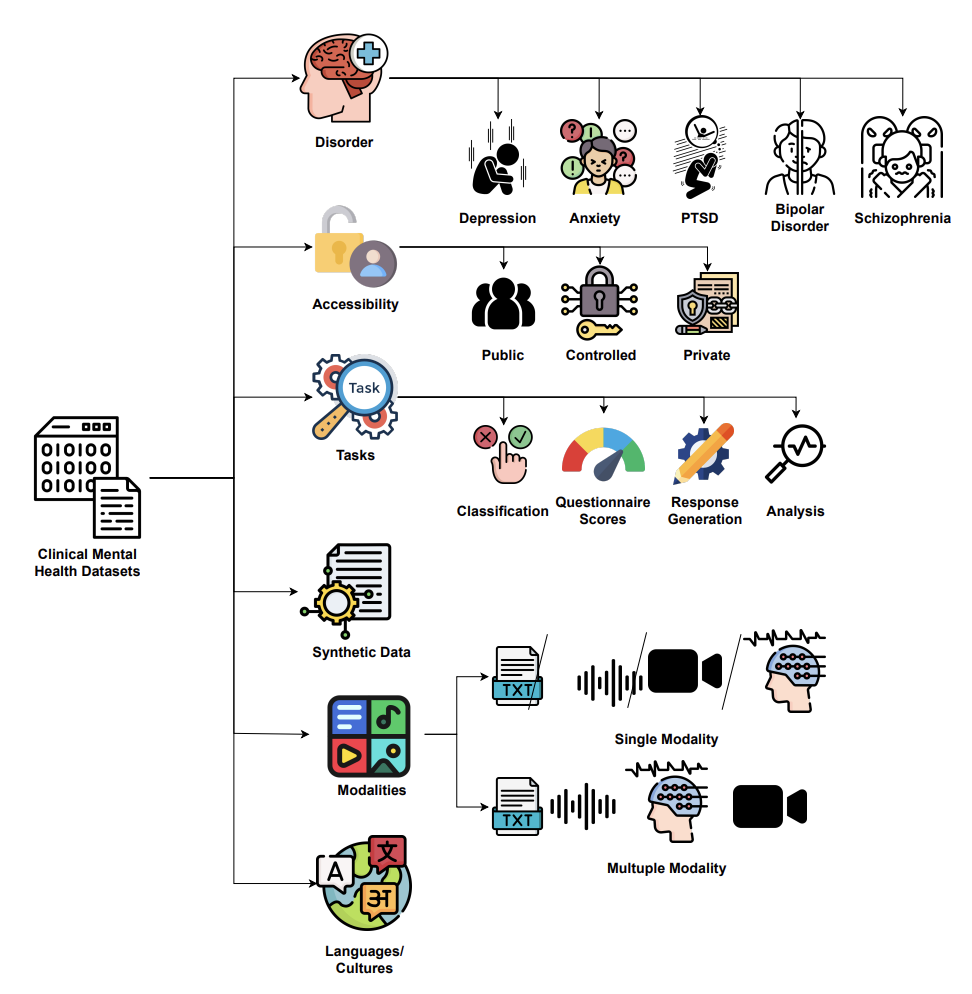

A structured survey of datasets used in clinical mental health AI, covering data collection methodologies, annotation practices, privacy considerations, and benchmark tasks.

Identifies current privacy issues and threats in mental health AI models and datasets, reviews existing solutions and evaluation methods from the literature, and proposes a pipeline for creating privacy-aware AI models in the mental health domain.

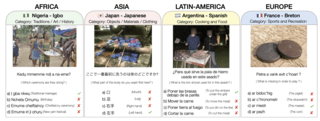

A benchmark for evaluating cultural awareness in multimodal machine translation, covering multiple languages and cultural contexts.

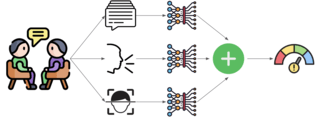

A novel multimodal depression severity prediction framework with a question-aware fusion mechanism and a loss function designed for imbalanced ordinal classification.
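The project pairs ordinal severity labels with class imbalance. The paper's own loss is not described here, so the snippet below shows only a generic pattern that addresses both issues: a cumulative (threshold) binary cross-entropy, combined with hypothetical inverse-frequency class weights. All function names are illustrative assumptions.

```python
import math

# Hypothetical sketch: a class-weighted cumulative (threshold) loss for ordinal
# labels. This is NOT the loss from the paper; it only illustrates the idea.

def softplus(x):
    # Numerically stable log(1 + exp(x)).
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))

def ordinal_targets(label, num_classes):
    # Encode label y in {0, ..., K-1} as K-1 binary targets [y > 0, y > 1, ...].
    return [1.0 if label > k else 0.0 for k in range(num_classes - 1)]

def inverse_frequency_weights(labels, num_classes):
    # Weight class c by N / (K * count_c) so rare severity levels count more.
    counts = [0] * num_classes
    for y in labels:
        counts[y] += 1
    n = len(labels)
    return [n / (num_classes * c) if c else 0.0 for c in counts]

def weighted_ordinal_loss(logits, labels, class_weights):
    # logits[i] holds K-1 threshold logits for example i; labels[i] is an int.
    total, weight_sum = 0.0, 0.0
    for z, y in zip(logits, labels):
        targets = ordinal_targets(y, len(z) + 1)
        # Binary cross-entropy per threshold, written via softplus:
        # -log(sigmoid(z)) = softplus(-z), -log(1 - sigmoid(z)) = softplus(z).
        bce = sum(t * softplus(-zk) + (1.0 - t) * softplus(zk)
                  for zk, t in zip(z, targets))
        total += class_weights[y] * bce
        weight_sum += class_weights[y]
    return total / weight_sum

def predict(threshold_logits):
    # Predicted level = number of thresholds the example is judged to exceed.
    return sum(1 for zk in threshold_logits if zk > 0)
```

Each threshold is a separate binary decision. As a result, confidently predicting level 3 for a level-0 example violates three thresholds and costs about three times as much as predicting level 1. A flat softmax cross-entropy would not reflect that distance.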

A multicultural and multilingual visual QA dataset spanning diverse cultural contexts, with comprehensive benchmarking of current large vision-language models.

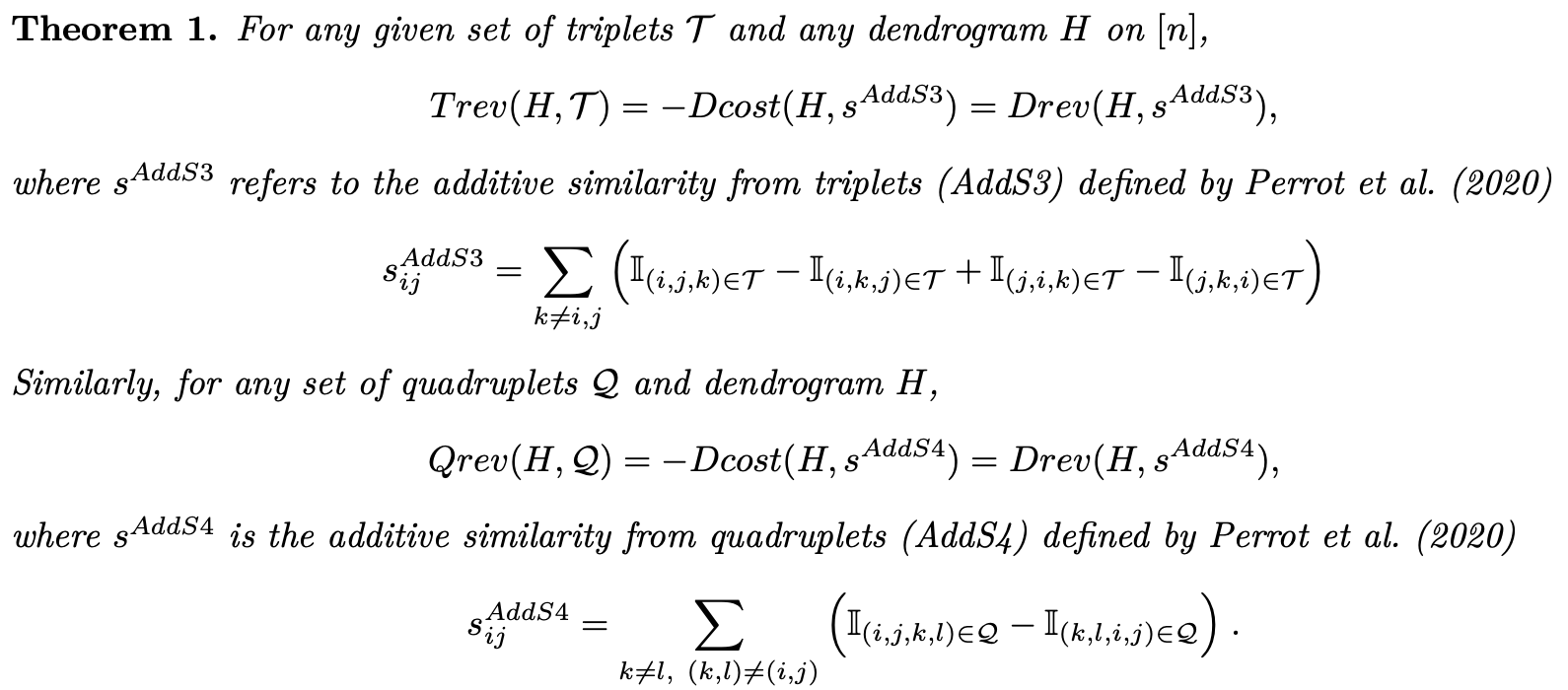

Introduced the first framework to evaluate hierarchical clustering using comparisons. A novel revenue function avoids reliance on pairwise similarities or ground-truth trees, achieving rank correlations of +0.9 with standard quality metrics.
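The summary reports a rank correlation but does not name the coefficient. The sketch below assumes Spearman's rho and shows how such a number is computed when one metric's ranking of candidate trees is compared against another's. The revenue function itself is not reproduced here, and the scores in the usage line are made up.

```python
import math

def average_ranks(values):
    # 1-based ranks; tied values share the mean of the positions they occupy.
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def spearman_rho(x, y):
    # Spearman's rho = Pearson correlation computed on the ranks.
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / math.sqrt(var_x * var_y)

# Made-up scores for five candidate trees under two metrics.
print(spearman_rho([0.2, 0.5, 0.4, 0.9, 0.7], [0.1, 0.3, 0.35, 0.8, 0.6]))
```

In this toy case the two metrics disagree only on the order of one adjacent pair of trees, and rho comes out at 0.9. That gives a rough intuition for how close a +0.9 agreement is.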

Pretrained a dialogue encoder with a structure-aware Discourse Mutual Information (DMI) loss function, achieving state-of-the-art results on dialogue understanding and retrieval tasks.

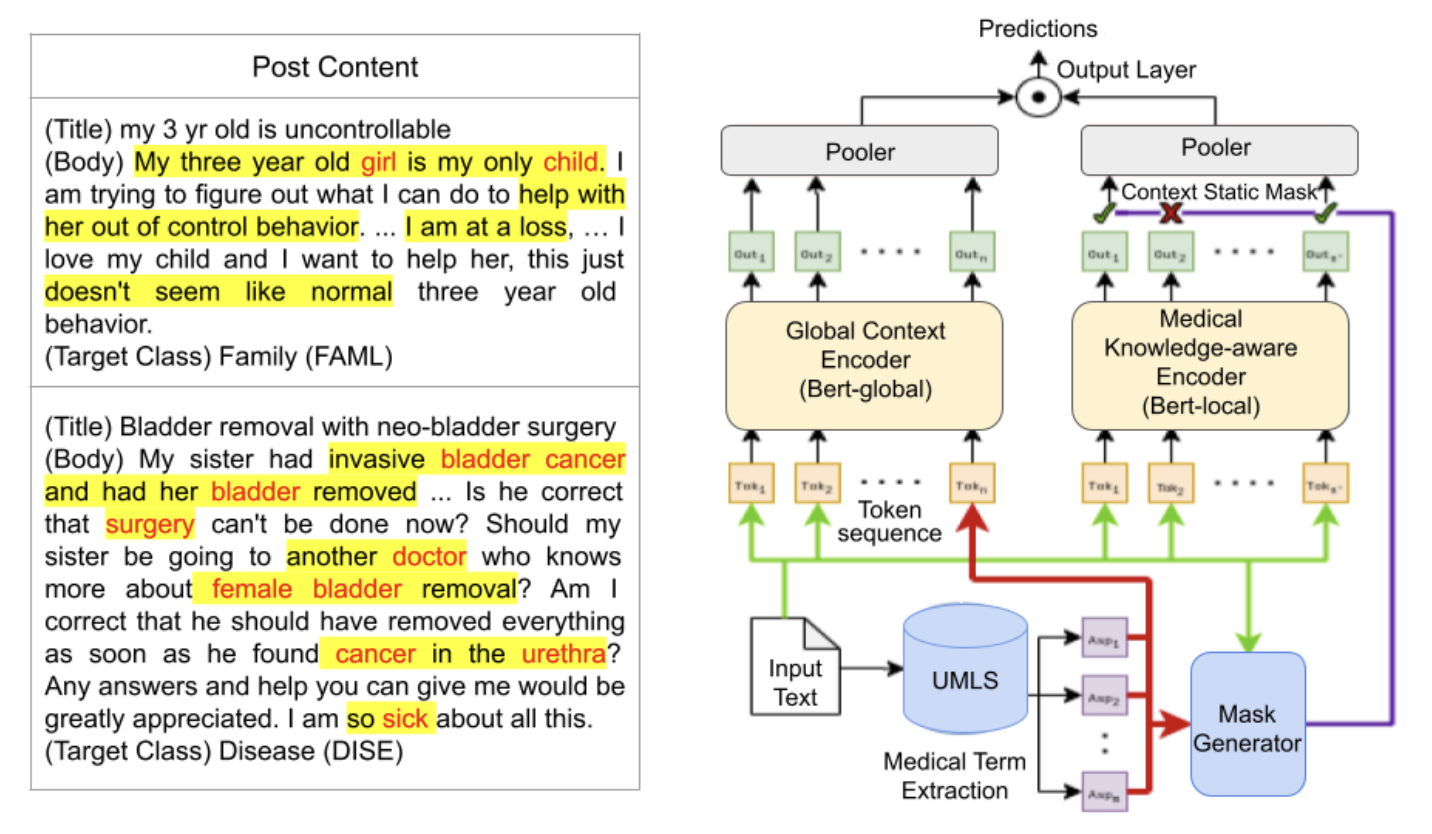

A knowledge-aware BERT model for classifying questions in medical forums, improving accuracy on clinical text classification by incorporating external medical knowledge.

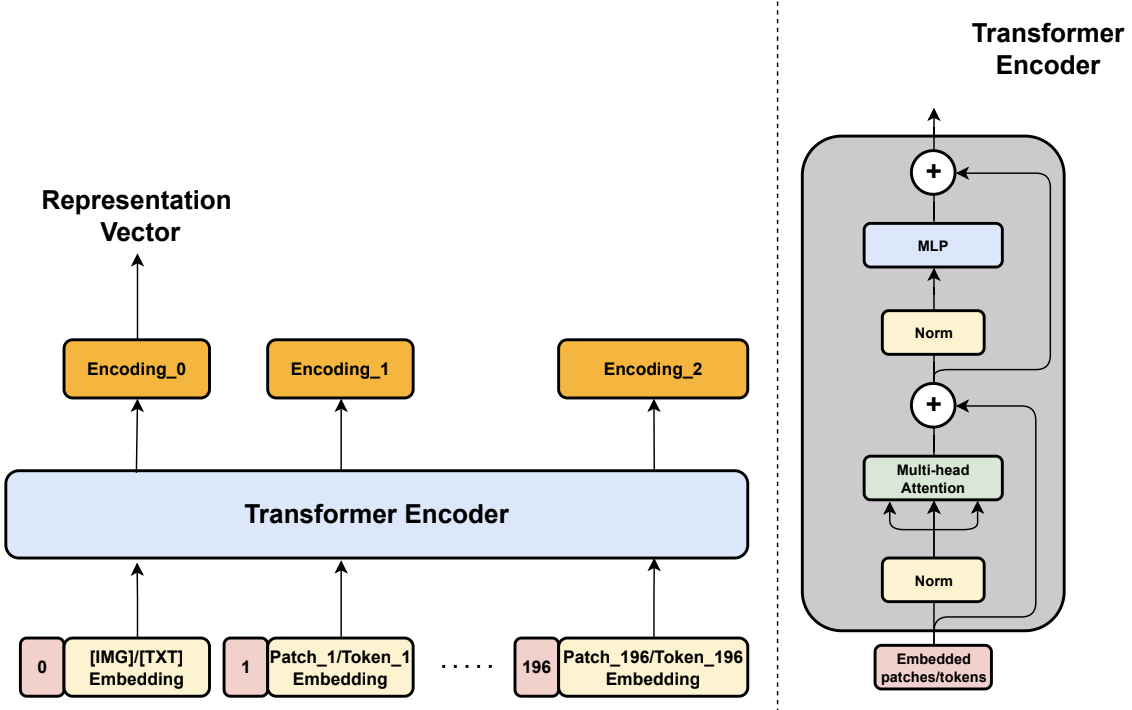

Proposed a novel crossmodal contrastive loss to train a 12-layer transformer for joint image–text embeddings on COCO, achieving performance competitive with Sentence Transformers on text-only tasks.
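For context, the baseline that crossmodal contrastive losses usually build on is the standard symmetric InfoNCE objective popularized by CLIP. The snippet below sketches that standard baseline, not the novel loss proposed in this project. The temperature of 0.07 and all function names are illustrative assumptions.

```python
import math

def l2_normalize(v):
    # Scale a vector to unit length so dot products become cosine similarities.
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def log_softmax(row):
    # Numerically stable log-softmax over one row of logits.
    m = max(row)
    lse = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - lse for x in row]

def symmetric_info_nce(image_embs, text_embs, temperature=0.07):
    # Standard CLIP-style loss: image i should match text i within the batch.
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    n = len(imgs)
    # logits[i][j] = cosine(image i, text j) / temperature
    logits = [[sum(a * b for a, b in zip(imgs[i], txts[j])) / temperature
               for j in range(n)] for i in range(n)]
    # Image-to-text direction: classify the correct caption in each row.
    loss_i2t = -sum(log_softmax(logits[i])[i] for i in range(n)) / n
    # Text-to-image direction: classify the correct image in each column.
    loss_t2i = -sum(log_softmax([logits[i][j] for i in range(n)])[j]
                    for j in range(n)) / n
    return (loss_i2t + loss_t2i) / 2
```

Every other item in the batch serves as a negative, so no explicit negative mining is needed. The symmetric average trains both encoders to retrieve in either direction.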

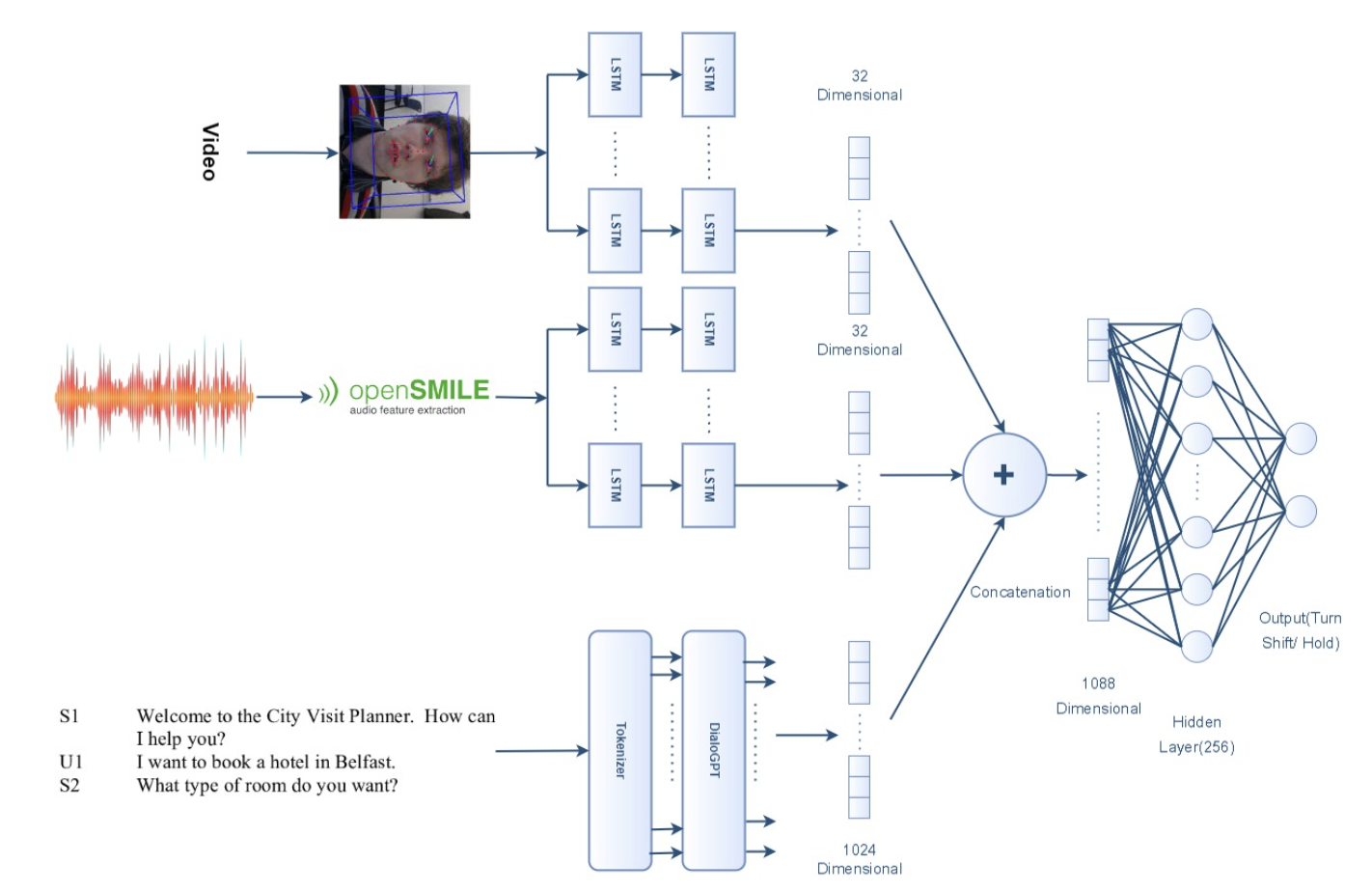

Developed a multimodal turn-taking predictor for conversational agents using LSTMs, attention mechanisms, and a pre-trained transformer to jointly model audio, visual, and text signals. Achieved a +0.09 macro-F1 improvement over state-of-the-art models.
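Macro-F1 averages the per-class F1 scores with equal weight, so rare turn-taking events count as much as frequent ones. A minimal sketch of the metric follows. The label names `"hold"` and `"shift"` are hypothetical and are not taken from the project.

```python
def macro_f1(y_true, y_pred, labels=None):
    # Unweighted mean of per-class F1 scores.
    if labels is None:
        labels = sorted(set(y_true) | set(y_pred))
    scores = []
    for c in labels:
        tp = sum(1 for t, p in zip(y_true, y_pred) if t == c and p == c)
        fp = sum(1 for t, p in zip(y_true, y_pred) if t != c and p == c)
        fn = sum(1 for t, p in zip(y_true, y_pred) if t == c and p != c)
        denom = 2 * tp + fp + fn
        # F1 = 2TP / (2TP + FP + FN); define it as 0 for a class never seen.
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

# A predictor that always says "hold" on a hold-heavy toy sample.
print(macro_f1(["hold", "hold", "hold", "shift"], ["hold"] * 4))
```

On this toy sample, accuracy is 0.75, but macro-F1 is 3/7 (about 0.43) because the "shift" class gets an F1 of zero. This is why macro-F1 is the more informative metric when some turn-taking events are rare.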

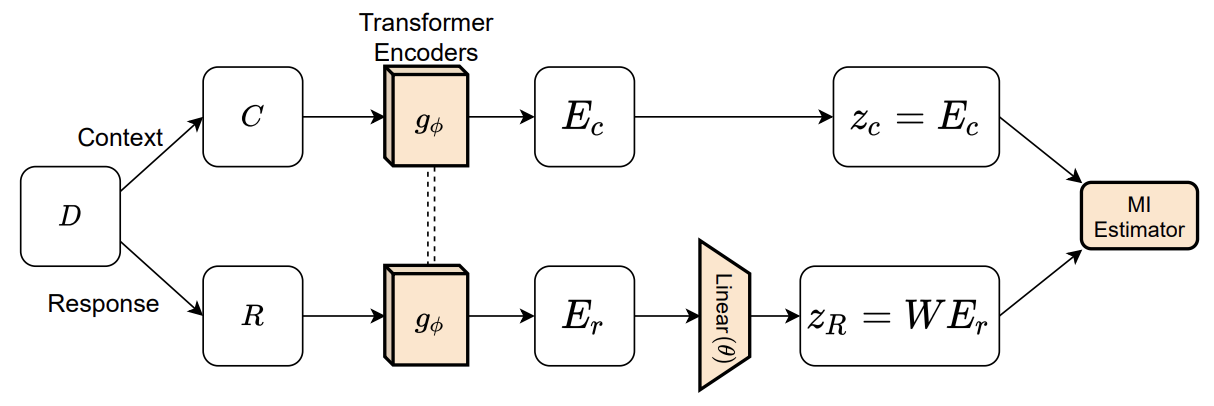

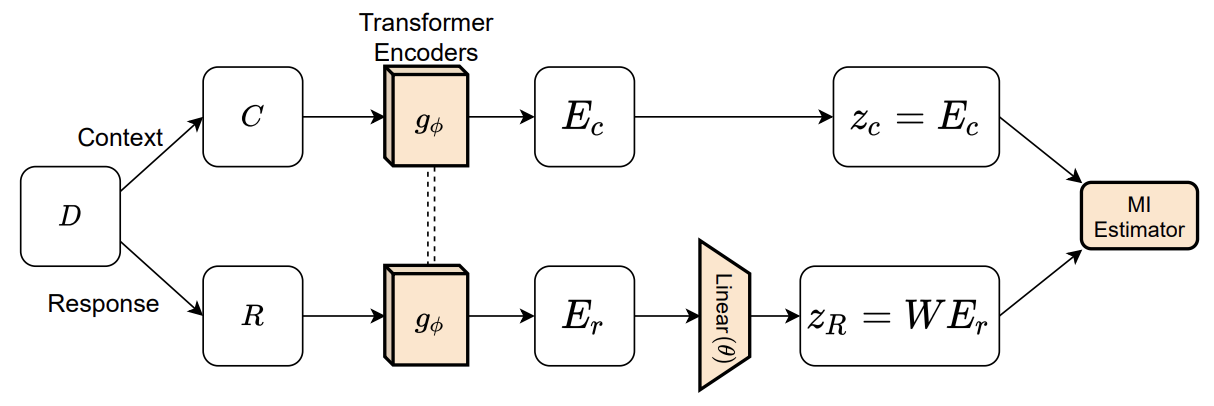

Proposed a novel discourse mutual information loss for a transformer-based dual encoder, plus a mapping algorithm from context to response embeddings. Achieved +4.1% on dialogue classification tasks and +10.6% on dialogue evaluation tasks over state-of-the-art baselines.